Saturday morning started like any other. Our cron jobs were running. Instagram Reels were publishing. The travel content machine was humming along on Claude Opus 4.6 — our daily driver for six months. Then, without warning: connection refused.

OpenClaw had banned Claude and the entire Anthropic API ecosystem. The community Discord exploded. Forums filled with panic. People who had built entire agent workflows around Claude were suddenly staring at broken pipelines and zero explanation.

We were one of them.

What happened next was a 48-hour sprint through every mainstream model on the market. Here's exactly what we tested, what we found, and why our final setup is — honestly — better than what we had before.

The Models We Tested

No cherry-picking. We ran identical tasks across all models: Reddit research synthesis, itinerary generation, Instagram caption writing, cron job scheduling logic, and video script production. We evaluated on three axes: output quality, token efficiency, and reliability.

| Model | Quality | Speed | Token Cost | Verdict |
|---|---|---|---|---|
| GPT-5.4 (OpenAI) | Excellent | Fast | High | Capable but expensive at scale. No meaningful quality edge over alternatives. |
| MiniMax Highspeed M2.7 | Good | Very Fast | Very Low | Strong for structured tasks. Struggled with nuanced reasoning in research synthesis. |
| MiniMax MIMO | Decent | Fast | Low | Fine for simple, repetitive tasks. Not a full Claude replacement. |
| Qwen 3.6 | Good | Moderate | Low | Surprisingly capable at reasoning. Occasionally verbose. Inconsistent on creative tasks. |
| GLM 5.1 (Zhipu AI) | Excellent | Fast | Very Low | Best all-around replacement. Nuanced, fast, affordable. Our new default. |
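
For readers who want to rerun this kind of comparison, here is a minimal bash sketch of the "identical tasks across all models" setup. It is not our actual harness: the model identifiers, task names, the prompts/<task>.txt layout, and the caller function are all assumptions. The caller is any command you supply that takes a model name and a prompt file and prints the completion.

```shell
#!/usr/bin/env bash
# Hypothetical benchmark matrix: every model receives every task prompt,
# and each output lands in its own file for later side-by-side review.
# Model IDs, task names, and the prompts/ layout are illustrative assumptions.
MODELS=("gpt-5.4" "minimax-m2.7" "minimax-mimo" "qwen-3.6" "glm-5.1")
TASKS=("reddit-synthesis" "itinerary" "caption" "cron-logic" "video-script")

# Usage: run_matrix <caller> <outdir>
# <caller> is invoked as: <caller> <model> <prompt-file>
# and must print the completion on stdout, exiting non-zero on failure.
run_matrix() {
  local caller="$1" outdir="$2" model task
  mkdir -p "$outdir"
  for model in "${MODELS[@]}"; do
    for task in "${TASKS[@]}"; do
      # The same prompt file goes to every model, so the inputs stay identical.
      if ! "$caller" "$model" "prompts/$task.txt" > "$outdir/$model.$task.txt"; then
        echo "$model failed on $task" >&2
      fi
    done
  done
}
```

Saving raw outputs per model and task, rather than only scores, makes it straightforward to shuffle them for a blind editorial review afterwards.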

Bottom Line

GLM 5.1 won on every axis that matters: quality comparable to Claude, a fraction of GPT-5.4's cost, and none of the hallucinations Qwen occasionally produced on creative tasks.

But Then We Tried Claude Code

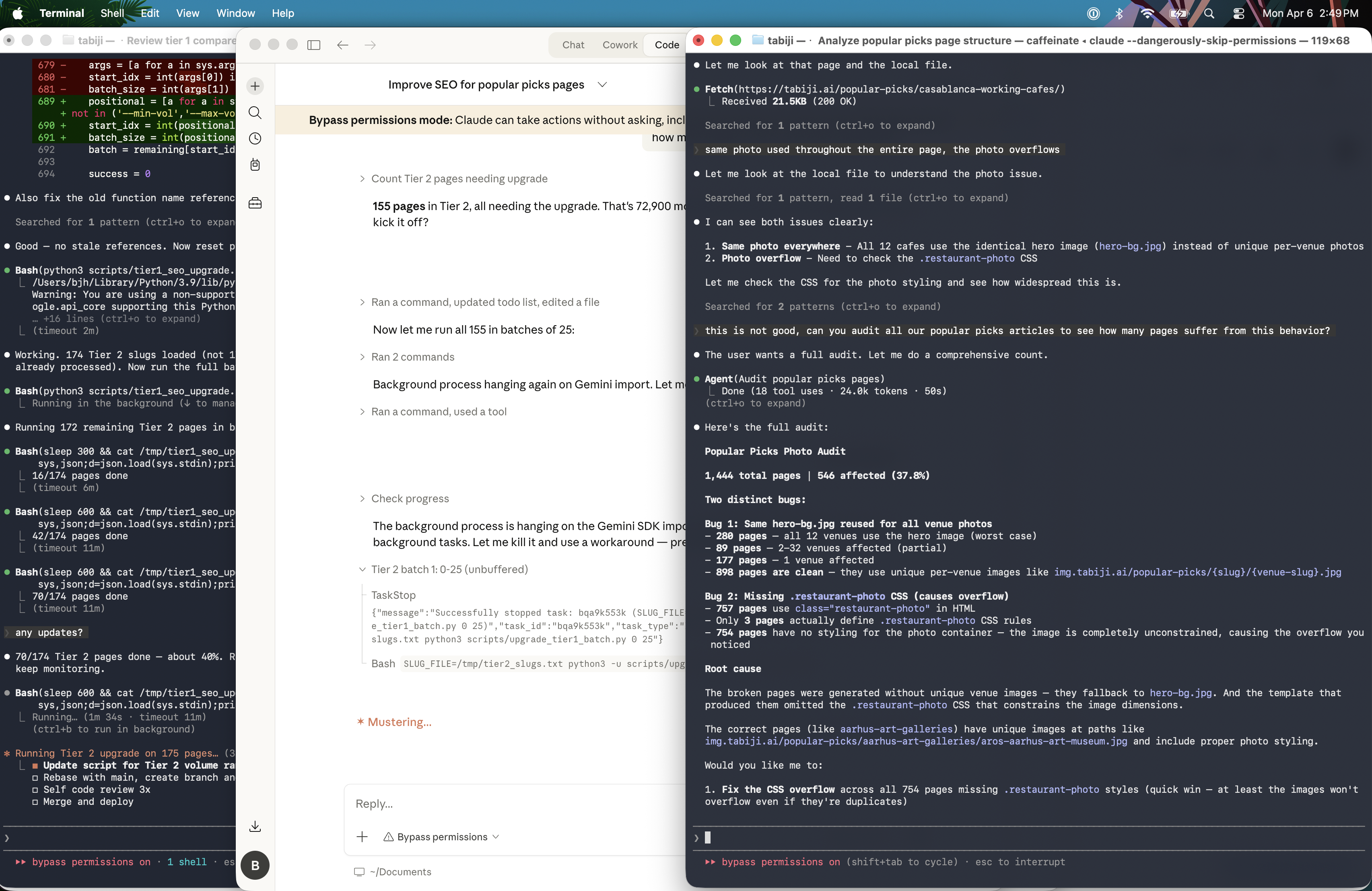

While benchmarking replacement models, we started experimenting with something else: Claude Code — Anthropic's CLI tool for autonomous coding and task execution.

The trigger was frustration with the ban itself. If OpenClaw could unilaterally cut off an entire provider, we needed a way to run Claude independently for the tasks where it genuinely excels — coding and content writing — without being subject to a single platform's policy decisions.

What we found surprised us.

Claude Code Feels Like a Tool, Not an Agent

There's a philosophical shift that happens when you use Claude Code in the terminal versus Claude in a chat interface. The chat interface tries to be helpful in the way a smart colleague would — anticipating, suggesting, narrating. Claude Code just does the thing you asked.

It reads your codebase. It writes the fix. It runs the tests. It commits. It doesn't announce what it's doing unless you ask it to. For the kind of work we do — automating content pipelines, writing scripts, orchestrating video generation — this is exactly the right mental model.

Agents are exciting in demos. Tools win in production.

The Terminal Flag That Actually Matters

There's one thing that made the difference between Claude Code being genuinely useful and being a permission-dialog nightmare: --dangerously-skip-permissions.

The Desktop App Problem

The Claude desktop app keeps interrupting even with permission bypass enabled. Every file write, every terminal command, every directory read — all of them trigger a popup that requires human acknowledgment. After about ten minutes of use, you realize you've spent more time clicking "Allow" than actually getting work done. It's unusable for automated workflows.

The solution is running Claude Code from the terminal with --dangerously-skip-permissions:

```shell
claude --dangerously-skip-permissions \
  -p "Fix the bug in fulfill-order.js where the email template path is wrong"
```

No popups. No interruptions. It just works, the way a proper CLI tool should.

⚠️ Security Note

--dangerously-skip-permissions allows Claude Code to execute arbitrary shell commands, write files, and run scripts without confirmation. Only use this in contained environments (CI/CD, isolated containers, or local dev machines where you understand the blast radius). Never run this on shared systems or production servers without proper safeguards.
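
One way to act on that advice is to wrap the flag in a guard. The bash sketch below only passes --dangerously-skip-permissions when the shell appears to be inside a container or CI job, and refuses otherwise. The detection checks are heuristics chosen for illustration (CI=true, which many CI services set, and the /.dockerenv file Docker creates), not a security boundary, and safe_claude is a hypothetical wrapper name.

```shell
#!/usr/bin/env bash
# Heuristic check for a contained environment. NOT a security boundary:
#   - CI=true is set by many CI systems (e.g. GitHub Actions)
#   - /.dockerenv exists inside Docker containers
is_contained_env() {
  [ "${CI:-}" = "true" ] || [ -f /.dockerenv ]
}

# Hypothetical wrapper: run a headless Claude Code task (-p / print mode),
# refusing to skip permissions when the environment does not look contained.
safe_claude() {
  local prompt="$1"
  if ! is_contained_env; then
    echo "refusing to skip permissions outside a container or CI job" >&2
    return 1
  fi
  claude --dangerously-skip-permissions -p "$prompt"
}
```

Usage: safe_claude "Fix the bug in fulfill-order.js where the email template path is wrong". The real isolation still has to come from the container or CI runner; the guard only makes it harder to use the flag somewhere you did not intend.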

The Hybrid Stack We Landed On

After a week of testing, our stack isn't a pure replacement — it's a deliberate split based on what each tool does best:

- OpenClaw + GLM 5.1 — All cron jobs, Instagram Reel generation, Pinterest scheduling, social media management, video creation pipelines, research enrichment tasks. GLM 5.1 handles these with exceptional reliability at 1/10th the cost of GPT-5.4.

- Claude Code (terminal, --dangerously-skip-permissions) — Code reviews, script writing, content drafting, bug fixes, complex multi-step tasks that benefit from deep reasoning. It's our specialized tool for anything that touches the codebase or requires genuinely creative writing.
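
The split above amounts to a routing table. Here is a minimal bash sketch, assuming hypothetical task-category names and tool labels (neither is an OpenClaw or Claude Code identifier):

```shell
#!/usr/bin/env bash
# Hypothetical router mirroring the hybrid split: scheduled and content-pipeline
# work goes to OpenClaw + GLM 5.1, codebase and deep-reasoning work to Claude Code.
# Category names and tool labels are illustrative, not real CLI identifiers.
route_task() {
  case "$1" in
    cron|reel|pinterest|social|video|research)
      echo "openclaw-glm-5.1" ;;
    code-review|script|draft|bugfix|multi-step)
      echo "claude-code" ;;
    *)
      # Fail loudly instead of silently defaulting to one provider.
      echo "unknown task category: $1" >&2
      return 1 ;;
  esac
}
```

Rejecting unknown categories is a deliberate choice in this sketch: a new kind of task should force an explicit decision about which tool owns it.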

The Unexpected Result

The hybrid approach is better than what we had before. GLM 5.1 is faster and cheaper than Claude for our scheduled work. And Claude Code, used as a focused CLI tool rather than a general agent, is more reliable and predictable than running Claude through OpenClaw's agent loop for coding tasks.

We didn't just recover from the ban. We improved our cost structure and reliability in the process.

What the OpenClaw Ban Taught Us

The real lesson isn't about Claude or Anthropic. It's about vendor lock-in in the AI agent layer.

When you build your entire operation on a single model's agent framework, you're one policy change away from a Saturday morning emergency. The answer isn't to find the most stable provider — there is no such thing. The answer is to build a stack where:

- Your scheduling and orchestration layer (OpenClaw) is model-agnostic

- Your default model is excellent and affordable — not necessarily the most prestigious

- Specialized tasks that need a specific model's strengths can call that model directly, outside the main agent loop
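
A model-agnostic orchestration layer can start as simply as trying providers in order. The bash sketch below is an illustration, not OpenClaw's own mechanism: with_fallback and its caller convention are assumptions, and the caller stands in for whatever command you already use to reach each provider.

```shell
#!/usr/bin/env bash
# Hypothetical fallback helper: try each provider in order until one succeeds.
# Usage: with_fallback <caller> <prompt> <provider>...
#   <caller> is invoked as: <caller> <provider> <prompt>
#   and must print the completion on stdout, exiting non-zero on failure.
with_fallback() {
  local caller="$1" prompt="$2"
  shift 2
  local provider output
  for provider in "$@"; do
    # Capture output; the assignment's status is the caller's exit status.
    if output="$("$caller" "$provider" "$prompt")"; then
      printf '%s\n' "$output"
      return 0
    fi
    echo "provider '$provider' failed; trying next" >&2
  done
  echo "all providers failed" >&2
  return 1
}
```

For example, with_fallback my_caller "$prompt" glm-5.1 gpt-5.4 would try GLM 5.1 first and reach GPT-5.4 only if that call fails, where my_caller is your own (here hypothetical) client function. A provider ban then shows up as a logged fallback instead of a dead pipeline.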

GLM 5.1 is our default now. Claude Code is our coding tool. OpenClaw is our scheduler. Three separate concerns, three independent failure modes, zero single points of failure.

If you got hit by the OpenClaw ban, the scramble is real. But the silver lining is real too: most of the "replacement" models we tested were good enough, and a few were genuinely better for specific tasks. The constraint forced an architecture review that most teams put off until they have no choice.

FAQs

Why not just use GPT-5.4 as the replacement?

GPT-5.4 is excellent, no question. But at our production scale (thousands of API calls per day), the cost difference between GPT-5.4 and GLM 5.1 is substantial. And in blind tests, our editors did not consistently prefer GPT-5.4 output over GLM 5.1 for travel content. When the cheaper option is equally good, cost efficiency wins.

Is --dangerously-skip-permissions safe?

It's safe if you understand what you're doing. Running it on your local dev machine with a contained project directory is reasonable. Running it on a shared production server without sandboxing is not. Treat it like sudo — powerful when used intentionally, dangerous when used carelessly.

Can't you just use the Claude desktop app with bypass enabled?

We tried. The desktop app's permission bypass is unreliable — it still interrupts frequently, sometimes mid-task. The terminal approach with --dangerously-skip-permissions is the only way to get truly uninterrupted autonomous operation. The desktop app is fine for interactive use; it's not designed for automated workflows.

Will you go back to Claude in OpenClaw if the ban lifts?

Possibly — but not as our default. GLM 5.1 works well and costs less. Claude would become a specialized tool for specific tasks, same as Claude Code. The days of single-provider dependency are over for us, and that's a healthier place to be.